In today’s rapidly evolving business landscape, organizations face an ever-increasing array of risks and compliance challenges. As businesses adapt to the digital age, it has become imperative for them to strengthen their Governance, Risk Management, and Compliance (GRC) strategies. Fortunately, combining artificial intelligence (AI) with GRC practices presents a transformative opportunity.

TrustCloud teamed up with experts to discuss:

- Current state of AI

- How to leverage AI to improve GRC

- The continuing evolution of AI

Speakers include:

Walter Haydock, Founder and Chief Executive Officer at StackAware

Frank Kyazze, Privacy Director at TrustCloud

Read on to see what they had to say, or check out their conversation on YouTube.

The Current State Of AI

AI is nothing new, but it was brought into popular focus by OpenAI’s release of ChatGPT last year. A large number of organizations use some form of artificial intelligence, whether that’s a linear regression algorithm to predict a parameter or something more advanced using large language models (LLMs). With the ability to interface with something like ChatGPT, through either the user interface or the application programming interface (API), organizations of any size can leverage state-of-the-art AI. It has really been a democratization of technology.

With the democratization of anything, you need to watch out for security first. One of the major challenges is that people may not be aware of the risks of the power they’re wielding. There are reports of unintended training of large language models on proprietary data, and both ethical security researchers and unethical hackers are using AI tools, the latter to accelerate their ability to cause mayhem. So there are a lot of potential benefits, but also a lot of potential risks, when you’re talking about AI and security, and it is important to make people aware of them.

Major Security Concerns

Privacy

Different privacy concerns with large language models:

Privacy is a subset of the general concern of data confidentiality. To ensure security, you need to answer certain questions, such as:

- Can a malicious actor access our data?

- Have we configured our applications and our servers correctly?

- Is our infrastructure service provider configured correctly?

- Is the setup correct?

These are all concerns that continue with AI development.

A few examples of confidentiality problems, including unintended training:

Example 1) Researchers at Wiz discovered a huge trove of data exposed by Microsoft’s AI research team. This was not an AI-specific attack, but it shows that the basics of information security do not go away even when you’re conducting AI research. The biggest AI-specific confidentiality concern is unintended training, which happens when someone provides information to a large language model that is actively training on it.

Example 2) The OpenAI ChatGPT product. When you use the user interface rather than the application programming interface, your inputs are used for training by default unless you opt out of that functionality. There have been reports of employees submitting confidential source code or meeting notes to ChatGPT through the user interface. The implication is that they did not turn off the default training setting, meaning the model may have been trained on, or otherwise have access to, this confidential information.

Example 3) Sensitive data generation. A model that was never trained on any of your personal data may still be able to infer that data from other information it has.

Risks

Privacy and confidentiality concerns with AI have grown in prominence as artificial intelligence technologies continue to advance. AI systems often require access to vast amounts of data to function effectively, raising questions about how personal information is collected, stored, and used. There are concerns about the potential for AI algorithms to inadvertently reveal sensitive data, leading to privacy breaches. Moreover, the increasing use of AI in surveillance, facial recognition, and predictive analytics has raised ethical questions about individual privacy and civil liberties. Striking the right balance between harnessing the benefits of AI and protecting privacy is a critical challenge that requires careful consideration and robust safeguards.

For example, suppose you have submitted an opt-out request or a data erasure request to an organization under GDPR, assuming the organization is subject to it. It is not clear how the organization would prevent the model from reproducing your personal data, because the data is not present in any database; the large language model is reproducing it based on other information it has. This is a related privacy risk, and because the technology is new, many people are trying to take advantage of it to get around privacy measures.

One of the most prominent risks is prompt injection, which can have serious data confidentiality impacts. The general problem is that an LLM can accept basically any type of text input. Security researchers are at the bleeding edge here, demonstrating complex attacks chained together with ChatGPT plugins. Another significant problem is the amplification of biases and prejudices present in the injected prompts. This can lead to the dissemination of false information and offensive content, causing confusion, harm, or offense to individuals who come across such AI-generated responses. It also raises concerns about accountability, as it becomes challenging to attribute responsibility for the content produced, blurring the ethical boundaries of AI usage.

AI is powerful, but it has associated risks as well. It is almost impossible to fully mitigate prompt injection attacks because there are an infinite number of potentially malicious ways to interact with an LLM. A related attack is poisoning, where an attacker feeds information into a large language model to seed a very specific set of responses. Normally, the model functions perfectly well, but in a specific use case, it provides corrupted data. For example, suppose you link an LLM to one of your financial transaction systems and ask it to extract the account and routing number from an unstructured document and then wire money to that account. An attacker who has poisoned the LLM could ensure that any time anyone asks for a routing number and account number, the money is sent to the attacker’s account.

So there is a practically unlimited set of ways these vulnerabilities might be exploited, and people will keep coming up with new ways to use them.
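
One partial safeguard for the wire-transfer scenario described above is to treat anything an LLM extracts as untrusted input and validate it before acting. The following is a minimal sketch, not a complete defense: the `APPROVED_PAYEES` allowlist, the `WireInstruction` type, and the function names are hypothetical and invented for illustration. The routing-number check uses the standard ABA checksum (weights 3, 7, 1 repeated over nine digits).

```python
from dataclasses import dataclass

# Hypothetical allowlist of (routing, account) pairs that a human has
# already verified out of band. In practice this would live in a database.
APPROVED_PAYEES = {("021000021", "123456789")}


@dataclass(frozen=True)
class WireInstruction:
    routing_number: str
    account_number: str
    amount_cents: int


def is_valid_aba_routing(routing_number: str) -> bool:
    """Check the ABA routing number checksum (9 digits, weights 3-7-1)."""
    if len(routing_number) != 9:
        return False
    if not (routing_number.isascii() and routing_number.isdigit()):
        return False
    weights = [3, 7, 1, 3, 7, 1, 3, 7, 1]
    total = sum(int(ch) * w for ch, w in zip(routing_number, weights))
    return total % 10 == 0


def review_llm_wire_request(instruction: WireInstruction) -> str:
    """Decide what to do with a wire instruction extracted by an LLM.

    Returns "reject", "needs_human_review", or "approved".
    """
    if not is_valid_aba_routing(instruction.routing_number):
        return "reject"
    if instruction.amount_cents <= 0:
        return "reject"
    payee = (instruction.routing_number, instruction.account_number)
    if payee not in APPROVED_PAYEES:
        # Unknown destination: never send automatically, escalate to a person.
        return "needs_human_review"
    return "approved"
```

The design choice here is that the LLM never has the final say: a poisoned or injected response can at worst trigger a human review, not an automatic transfer to an unknown account.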

How To Leverage AI To Improve GRC

Assessing AI-Related Security Risks

When it comes to assessing AI risks, the National Institute of Standards and Technology (NIST) has released an AI Risk Management Framework (AI RMF). It is relatively comprehensive, but it takes a high-level approach to assessing risk related to artificial intelligence systems.

Some great recommendations for avoiding AI security risks are:

Identify the data retention policy of a third-party vendor. For example, in the terms and conditions for OpenAI, if you use the API, there’s a 30-day data retention period with some exceptions for legal holds. If you use the user interface, there is basically an indefinite retention period.

- Be aware of and understand what the data retention periods are.

- Understand what the default training settings are.

- Are you default-opted-in?

- Are you opted out?

- Do you need to opt in?

- Should you opt in?
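
The questions above can be captured as a small vendor review record. This is an illustrative sketch only: the `AIVendorProfile` class, the `review_findings` function, the vendor names, and the 30-day policy threshold are assumptions made for the example, and the sample values simply mirror the retention and training defaults described in this article rather than verified vendor terms.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class AIVendorProfile:
    name: str
    retention_days: Optional[int]      # None means indefinite or undocumented
    trains_on_inputs_by_default: bool  # Are you default-opted-in?
    opt_out_available: bool            # Can you opt out?


def review_findings(profile: AIVendorProfile, max_retention_days: int = 30) -> List[str]:
    """Return a list of issues to resolve before using the vendor."""
    findings = []
    if profile.retention_days is None:
        findings.append("retention period is indefinite or undocumented")
    elif profile.retention_days > max_retention_days:
        findings.append(
            f"retention of {profile.retention_days} days exceeds "
            f"policy limit of {max_retention_days}"
        )
    if profile.trains_on_inputs_by_default:
        if profile.opt_out_available:
            findings.append("training is on by default; opt out before use")
        else:
            findings.append("training is on by default with no opt-out")
    return findings


# Example values mirroring the API vs. user interface distinction above.
api_access = AIVendorProfile("example-llm-api", 30, False, True)
chat_ui = AIVendorProfile("example-llm-chat-ui", None, True, True)

for vendor in (api_access, chat_ui):
    print(vendor.name, review_findings(vendor))
```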

Some of the things you should look at are:

- If you are connecting any of your databases to an AI tool, minimize the amount of information that gets sent to that tool. If you’re just providing huge amounts of information that don’t need to go into the AI system, it could lead to unintended training situations.

- Understand fully the nature of the AI system. Does it trigger any sort of requirement under any of the privacy frameworks? For example, under GDPR, there are some special restrictions on the processing of biometric data and using that for behavioral analysis. Make sure you really narrow down your requirements while dealing with AI systems.
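
As a rough illustration of the data minimization point above, the sketch below keeps only an allowlist of fields from a record and redacts email addresses before the record is sent to any AI tool. The field names, the sample ticket, and the `minimize_record` helper are hypothetical, and a simple email regex is only one of many redaction steps a real pipeline would need.

```python
import re
from typing import Any, Dict, Iterable

# Simple pattern for email addresses; real redaction needs broader coverage.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def minimize_record(record: Dict[str, Any], allowed_fields: Iterable[str]) -> Dict[str, Any]:
    """Keep only allowlisted fields and redact emails in string values."""
    allowed = set(allowed_fields)
    minimized = {}
    for key, value in record.items():
        if key not in allowed:
            continue  # Drop anything the AI tool does not need.
        if isinstance(value, str):
            value = EMAIL_PATTERN.sub("[REDACTED_EMAIL]", value)
        minimized[key] = value
    return minimized


# Hypothetical support ticket pulled from an internal database.
ticket = {
    "ticket_id": 4821,
    "summary": "Login fails for jane.doe@example.com after password reset",
    "customer_name": "Jane Doe",
    "payment_card_last4": "4242",
}

prompt_payload = minimize_record(ticket, allowed_fields=["ticket_id", "summary"])
print(prompt_payload)
```

An allowlist is used rather than a blocklist so that newly added database columns are excluded by default.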

Implementing An AI Security Risks Framework

There are a couple of different frameworks for AI security. One of the most talked-about is the NIST AI Risk Management Framework (AI RMF). It is extremely comprehensive but also really high-level in terms of how an organization establishes controls in its program to assess the risk of using AI. It contains statements about human and AI interactions and the role they are going to play for GRC teams.

There are some really important points from the NIST AI RMF:

- Human roles and responsibilities in decision-making and overseeing AI systems need to be clearly defined and differentiated. How do decisions made by humans translate into AI systems? Many of these AI services will be used for decision-making, whether you are evaluating recruits for an organization or preparing analysis for leadership about what the business should do next. Because AI systems are clearly being used for decision-making, it is essential to be able to differentiate responsibility between humans and the AI systems.

As a lot of these AI systems are designed to learn through use, they can learn through end users to get better. But if they’re not provided with the right information from end users, it could lead to bias.

- The decisions that go into the design, development, deployment, evaluation, and use of AI systems can reflect systemic and human cognitive biases. There will always be a risk of bias. If a group of end users with similar ideals is using an AI system, the system is going to learn from the input and feedback that group provides, and that could lead to bias.

- A systemic bias at the organizational level can influence how teams are structured and who controls the decision-making process throughout the AI lifecycle. These biases can also influence downstream decisions by end users, decision-makers, and policymakers and may lead to negative impacts.

- When using such a powerful tool, you tend to rely on it heavily. When AI systems make major decisions very rapidly, there might not be time for humans to step back and assess them, so those decisions might be treated as if they were certainly correct. That makes influence over the system a major risk: who is influencing the decision-making of the AI systems? Is it the right people? Are those people providing enough input for the system to avoid bias?

- Another major area called out in the NIST AI RMF concerns AI actors. Those who perform human factors tasks and activities can assist technical teams by anchoring design and development processes to user intentions, representatives of the broader AI community, and societal values. These actors further help to incorporate context-specific norms and values in system design and evaluate end-user experiences in conjunction with AI systems. AI actors (the designers, the developers, the purchasers of third-party AI services, and the end users) all play major roles in the AI lifecycle. It is important to understand what impact AI systems will have on societal values and norms as organizations, communities, and nations come to rely on the processing these systems do.

Taken together, the NIST AI RMF is built around four core functions, or pillars:

- Govern: building a culture of risk management and cultivating it

- Map: recognize and identify related risks.

- Measure: identified risks are assessed, analyzed, and tracked.

- Manage: risks are prioritized and acted upon based on their projected impact.
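
To make the four functions more concrete, here is a minimal sketch of a risk register that touches each one. The likelihood-times-impact score is a common risk-assessment convention, not something the NIST AI RMF prescribes, and the `AIRisk` class, the 1 to 5 scales, and the sample entries are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class AIRisk:
    description: str  # Map: the identified risk
    likelihood: int   # Measure: 1 (rare) to 5 (almost certain)
    impact: int       # Measure: 1 (negligible) to 5 (severe)
    owner: str        # Govern: the accountable person or team

    @property
    def score(self) -> int:
        # Simple likelihood x impact score (an assumed convention).
        return self.likelihood * self.impact


def prioritize(risks: List[AIRisk]) -> List[AIRisk]:
    """Manage: order risks so the highest-scoring ones are handled first."""
    return sorted(risks, key=lambda r: r.score, reverse=True)


# Hypothetical register entries drawn from risks discussed in this article.
register = [
    AIRisk("Unintended training on confidential data", 3, 4, "Security"),
    AIRisk("Prompt injection through a connected plugin", 4, 5, "Engineering"),
    AIRisk("Biased output in candidate screening", 2, 5, "HR"),
]

for risk in prioritize(register):
    print(f"{risk.score:>2}  {risk.owner:<12} {risk.description}")
```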

Examples of AI security risk-related frameworks:

Here is an interesting Microsoft AI security framework in terms of defining roles.

This covers the Govern aspect, defining the roles of AI designers, AI administrators, AI officers, and AI business consumers. Each of those roles has a part to play in terms of the responsibilities and requirements they have for the safety and trustworthiness of AI systems. The model also provides for monitoring, so that metrics from monitoring can feed the Measure function of the AI RMF, and it makes sure that responsibilities and requirements are clearly stated for the AI business consumer. Inside it sits a cycle of training, operationalizing, and inferencing, which is the cycle Microsoft has defined for developing and deploying AI systems for its businesses and customers.

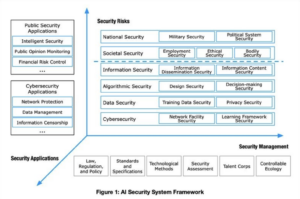

Another interesting framework comes from a key Chinese think tank and was published in translation by DigiChina.

It is broken down into three areas: security applications, security risks, and security management, which are distinct but interdependent.

The Continuing Evolution Of AI

AI’s impact is profound and ever-expanding. The continuing evolution of AI is a remarkable testament to the relentless pursuit of innovation in the field of technology. AI, which once seemed like science fiction, has rapidly transformed into an integral part of our daily lives. However, this evolution also raises important ethical and societal questions, highlighting the need for responsible AI development and governance to ensure that AI technology continues to benefit humanity while minimizing potential risks.

Where is AI going in the short term?

There is going to be the standard arms race between attackers and defenders. So attackers will be unconstrained in what they can do. They will leverage AI tools to the maximum extent possible to cause damage and, in most cases, just make money for themselves however they can. And then defenders will always have to be more risk-averse because they’ve got something to protect and will have to move more slowly to onboard these systems. And perhaps the only thing that is going to push these in the opposite direction is the business need to leverage AI tools to enhance productivity and deliver value to customers. So you need to take a forward-leaning approach to using AI for both business and security use cases and know that either your competitors will be deploying AI or malicious attackers will be deploying AI.

Security and compliance teams can help the business teams deploy AI, keeping themselves up to date with the help of AI risk management courses, prebuilt products, etc.

How will AI evolve?

As we get closer to artificial general intelligence, there will be major organizational restructuring. If you have a system that can replace the jobs of 20 to 30 people and you adopt it because of the cost savings, there is unfortunately also the risk of losing the human element that checks these AI systems for correctness and bias. So there will be more emphasis on auditing them. Fortunately, or maybe not fortunately, much of the burden of auditing and demonstrating the trustworthiness of AI systems is going to fall on the 1% of organizations that are AI service providers. If you’re using a third-party AI system, you will need to make sure the provider has the necessary certifications and audits before you use it. Supply chain risk is also going to get more focus, in terms of inventorying all of your usage of AI, whether through third parties or internally.

Is it better to wait until guidelines become clearer?

At least in the United States, we’re not going to see comprehensive AI legislation until 2025. Clarity from regulators on the gray areas may take even longer, so you will have to find out along the way, through regulation and enforcement, what is acceptable and what is not. I would encourage people to move, to be forward-leaning, and to keep their eyes open. As decisions and judgments come down, they will help establish lanes in the road. But your competitors aren’t going to wait, and malicious actors are not going to wait. So don’t wait.

One of our tools, TrustShare, helps our customers automate some of the painstaking process of answering customer security questions. With an influx of security questions related to AI, the organization mapped out their usage and conducted a risk assessment. If you don’t answer the AI security questions, you can lose the deal. So we’re starting to look into creating an AI security framework at TrustCloud to help our customers have controls in place for AI security and present those controls and any evidence to their own customers whenever these security questionnaires come in.

It may take years for proper legislation to be in place, but business is still moving and customers are still sending those questionnaires, so you also need to keep moving instead of waiting for clear guidelines on AI security.

In conclusion, the future of AI holds immense promise, and as it continues to evolve, its transformative power will shape the way we work, live, and interact with the world around us.